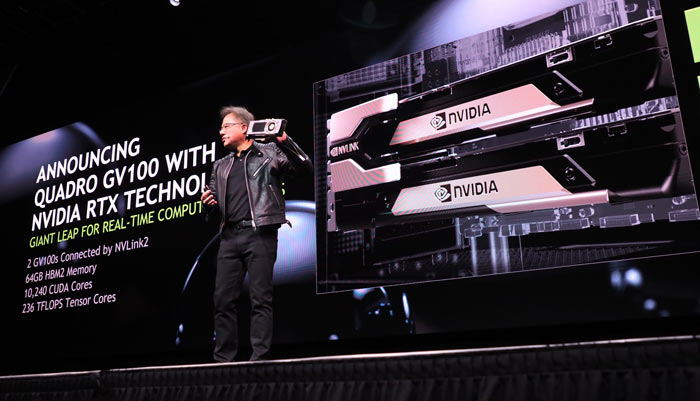

Consumer-grade GPUs from Nvidia have long enjoyed cutting-edge real-time ray tracing hardware along with dedicated silicon for machine learning and artificial intelligence. As is usually the case, newer technologies make it into the general consumer space first, with professional workstation hardware favoring stability and compatibility above all. We've seen some of the technology from the Nvidia RTX line make it to the Quadro line with cards like the Quadro RTX 8000, but the Quadro GV100 is a different beast entirely.

Doing a Volta

The GV100 uses the Volta architecture, which diverges from the Turing architecture in a number of important ways. So comparing it with the Turing-based Quadro RTX cards isn't so much a matter of which is better. Rather, it's a question of which is more fit for purpose.

Comparing the GV100 to the top-end Quadro RTX 8000 shows massive differences in certain types of computational tasks. For scientific and engineering users, one of the most important figures to pay attention to is double-precision floating-point performance. The GV100 offers 7.4 TFLOPS of double-precision compute power. The Quadro RTX 8000 musters only about half a TFLOP at that precision level. In fact, Nvidia doesn't even list a double-precision figure for the Quadro RTX 8000, since the card clearly isn't designed for that kind of work.

Of the two, the GV100 is clearly the only card worth considering if your work involves double-precision calculations. Even its single-precision figure is only slightly behind that of the RTX 8000.
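To make the double-precision gap concrete, here is a minimal sketch of the arithmetic behind those figures. The single-precision numbers and the FP64-to-FP32 rate ratios below are assumptions taken from commonly quoted specifications, not from this article, so treat the output as an estimate rather than an official figure.

```python
# Assumed figures (not from the article): peak FP32 throughput in TFLOPS
# and the ratio of FP64 to FP32 throughput for each architecture.
CARDS = {
    "Quadro GV100": {"fp32_tflops": 14.8, "fp64_ratio": 1 / 2},      # Volta, assumed 1:2
    "Quadro RTX 8000": {"fp32_tflops": 16.3, "fp64_ratio": 1 / 32},  # Turing, assumed 1:32
}


def fp64_tflops(card: str) -> float:
    """Estimate peak FP64 throughput from the FP32 figure and the rate ratio."""
    spec = CARDS[card]
    return spec["fp32_tflops"] * spec["fp64_ratio"]


gv100_fp64 = fp64_tflops("Quadro GV100")
rtx8000_fp64 = fp64_tflops("Quadro RTX 8000")
fp64_advantage = gv100_fp64 / rtx8000_fp64

for name in CARDS:
    print(f"{name}: {fp64_tflops(name):.2f} TFLOPS FP64 (estimated)")
print(f"GV100 FP64 advantage: {fp64_advantage:.1f}x")
```

Under these assumptions the estimate lands on the 7.4 TFLOPS quoted for the GV100 and on roughly half a TFLOP for the RTX 8000, while the single-precision inputs show how close the two cards are at that precision.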

Thanks For The Memory

The other major specification that sets the GV100 apart from other Quadro cards that also feature AI and ray tracing is its memory. The RTX 8000 uses GDDR6 on a 384-bit bus, while the GV100 uses HBM2 (High Bandwidth Memory) on a massive 4096-bit bus. The RTX 8000 has 48GB of memory versus 32GB on the GV100. Total throughput on the GV100 is about 200GB/s higher than on the RTX 8000, which is roughly 30% better. However, that relatively modest throughput gain isn't the whole story. Such a wide bus allows for much better parallel operation, which suits exactly the sorts of supercomputing tasks the GV100 is designed for.
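The throughput gap can be sanity-checked from the bus widths. The sketch below uses the usual rule of thumb that peak bandwidth equals bus width times per-pin data rate, divided by eight to convert bits to bytes. The per-pin rates are assumptions and do not come from this article. With them, the gap works out to roughly 200GB/s, a little under a third of the RTX 8000's bandwidth.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s: bus width (bits) x per-pin rate (Gbit/s) / 8."""
    return bus_width_bits * data_rate_gbps / 8


# Per-pin data rates are assumptions, not figures from the article.
GV100_HBM2_RATE_GBPS = 1.7      # assumed effective HBM2 rate per pin
RTX8000_GDDR6_RATE_GBPS = 14.0  # assumed GDDR6 rate per pin

gv100_bw = peak_bandwidth_gb_s(4096, GV100_HBM2_RATE_GBPS)
rtx8000_bw = peak_bandwidth_gb_s(384, RTX8000_GDDR6_RATE_GBPS)

gap_gb_s = gv100_bw - rtx8000_bw
advantage_pct = 100 * gap_gb_s / rtx8000_bw

print(f"GV100:    {gv100_bw:.0f} GB/s")
print(f"RTX 8000: {rtx8000_bw:.0f} GB/s")
print(f"Gap:      {gap_gb_s:.0f} GB/s ({advantage_pct:.0f}% higher)")
```

The calculation also shows why the two designs end up closer than the bus widths suggest: HBM2 runs each pin far slower than GDDR6 and makes up for it with a much wider interface.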

More Than The Sum Of Its Parts

The value of the GV100 also comes from the many perks Nvidia offers to owners of its hardware. Deep learning frameworks developed by Nvidia are open to owners of the card, and of course the AI-specific tensor cores on the GV100 offer a massive boost to both AI training and inference. Taken as a whole, it's hard to think of any other GPU product on the market that offers this specific mix of hardware and software features. If your workload needs double-precision calculations, machine learning or massive memory bandwidth, this is the only game in town.

As A Daily Driver

Unlike Nvidia's Tesla cards, which work only for computational purposes, the GV100 also has to work as a normal graphics card. In that sense the GV100 is also formidable. It has no fewer than four DisplayPort 1.4 connectors, supporting four 5120x2880 monitors at 60Hz or four 4096x2160 monitors at 120Hz. Although the GV100 has been out since 2018, there is simply no other professional GPU solution on the market that matches what it can do as a total package.